The probability scale (F)

Definition

All probabilities are between 0 and 1, inclusive. If something has a probability of 0, it is impossible: it will never happen. If something has a probability of 1, it is certain: it definitely will happen.

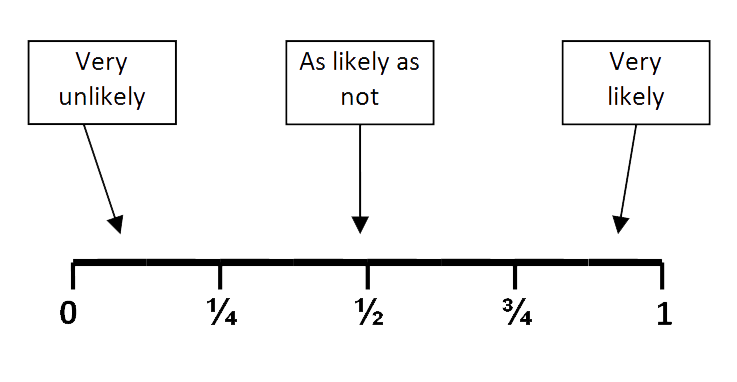

The Probability Scale runs from 0 to 1.

Diagram

Note

The probability scale is a number line running from 0 to 1. The closer an event is to 0, the less likely it is to happen. The closer it is to 1, the more likely it is to happen. In the middle, with probability 1/2, an event has the same chance of happening as not happening.

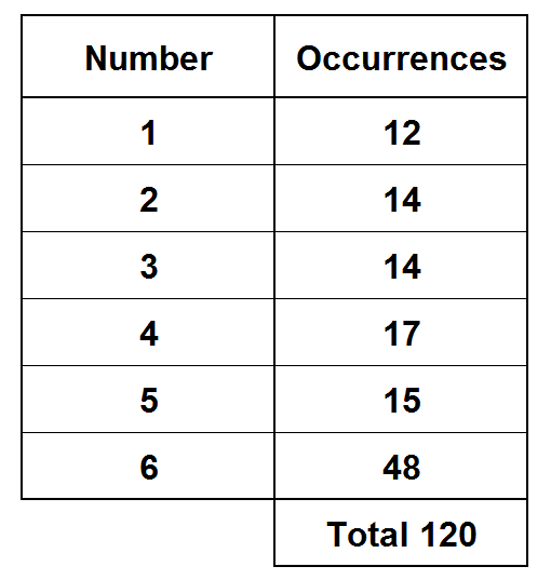

The probabilities of all the possible outcomes always add up to 1. So, if P(x) is the probability that a rolled dice lands with the number x uppermost, then P(1) + P(2) + P(3) + P(4) + P(5) + P(6) = 1.
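As a quick check of this rule, here is a minimal Python sketch. It assumes a fair six-sided dice, so each face is given a probability of 1/6; the name `dice_probabilities` is only illustrative.

```python
from fractions import Fraction

# Assumption: the dice is fair, so each of the six faces has probability 1/6.
dice_probabilities = {face: Fraction(1, 6) for face in range(1, 7)}

# Add up P(1) + P(2) + ... + P(6).
total = sum(dice_probabilities.values())
print(total)  # 1
```

Using `Fraction` rather than decimals keeps the arithmetic exact, so the total is exactly 1 with no rounding error.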

Method

Probabilities can be expressed as fractions or decimals (or sometimes percentages).
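To see how the three forms relate, the sketch below converts a fraction into a decimal and a percentage. The helper name `describe` is hypothetical, and the decimal and percentage are rounded for display.

```python
from fractions import Fraction

def describe(probability):
    """Show one probability as a fraction, a decimal and a percentage."""
    decimal = float(probability)   # fraction -> decimal
    percentage = decimal * 100     # decimal -> percentage
    # Rounded to 3 decimal places and 1 decimal place for display only.
    return f"{probability} = {decimal:.3f} = {percentage:.1f}%"

print(describe(Fraction(1, 2)))  # 1/2 = 0.500 = 50.0%
print(describe(Fraction(5, 6)))  # 5/6 = 0.833 = 83.3%
```

Note that 5/6 is a recurring decimal (0.8333...), so its decimal and percentage forms here are approximations, while the fraction is exact.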

Example

Put these events in order of their probability of happening, starting with the most likely first:

- A tossed coin lands heads up
- A random card picked from a deck is an Ace
- A dice is tossed and the number is 2 or greater

Solution

Let's look at each in turn.

The chance of a coin landing heads up is 1/2 (there are two outcomes, heads and tails, and both are equally likely to happen).

In a standard deck, there are 52 cards in total, but only 4 of them are Aces, so the chance of picking an Ace is 4/52, which simplifies to 1/13.

On a dice, there are the numbers 1 to 6, and 5 of those outcomes (2, 3, 4, 5 and 6) are 2 or greater. So, the chance of rolling a number 2 or greater is 5/6.

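Comparing the three, 5/6 is greater than 1/2, and 1/2 is greater than 4/52. So, in order of probability with the most likely first, we have:

1. A dice is tossed and the number is 2 or greater (5/6)
2. A tossed coin lands heads up (1/2)
3. A random card picked from a deck is an Ace (4/52)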
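The ordering can be checked with a short Python sketch. It assumes a fair coin, a standard 52-card deck and a fair six-sided dice, as in the solution; the names `events` and `ranked` are only illustrative.

```python
from fractions import Fraction

# Probabilities taken from the solution (fair coin, standard deck, fair dice).
events = {
    "A tossed coin lands heads up": Fraction(1, 2),
    "A random card picked from a deck is an Ace": Fraction(4, 52),
    "A dice is tossed and the number is 2 or greater": Fraction(5, 6),
}

# Sort by probability, largest first.
ranked = sorted(events.items(), key=lambda item: item[1], reverse=True)

for name, probability in ranked:
    print(f"{name}: {probability}")
```

`Fraction` simplifies automatically, so the Ace probability is printed as 1/13 rather than 4/52.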